The AI Diaries

As soon as it works, no one calls it AI anymore - John McCarthy

So I tend to avoid using the term AI, but it's sometimes unavoidable. Right now I am being forced to spend considerable time using coding tools. Sometimes I like it, sometimes I think it's a bore, and almost always it wastes some of my time. At a minimum it makes up for all the time it wastes, but it always creates more noise than value. I have a lot of anecdotes from working in this space, so I will land them here, at the edge of obscurity.

DevLog

06 03 2026

During the beginning of the hype cycle for "AI", the thing that turned me off was the noise about everyone having to become a "Product Engineer." It was the concept that the only value software provides is building products to be sold. Disgusting! (Obviously, I know what platform this is and how dumb this opinion is for this tribe)

It took a while and a few books, one specifically that told some stories of Grace Hopper, to heal my issues. It was the realization that software and automation exist to reduce drudgery, not to create revenue. It is the need to reduce drudgery that results in positive outcomes, and that is the reason people would pay for software.

Have your own opinions, but I repeatedly seem to re-learn this lesson: my choices should be about my thoughts, and those are validated by vetting the thoughts of others.

01 03 2026

I have been off in a microcosm of building PoCs for things, some of them useful:

Some not so much:

But coming back to the real world for a moment I am starting to see how I might be in a bubble, an AI bubble. I have simplified AI as a soft executor. Like a script with smart error handling. It's not conversational, it's imperative and command oriented. I think I am a control freak or maybe it's a matter of perspective scale, but I am preparing my statement to the model with a pre-defined expectation of the outcome. I also express the expected outcome and then explain the direction and then confirm the outcome in my statement. I am not looking for the model to introduce inspiration. I highly question the decision to allow the idea to flow from the model, because it often has some pretty bad ideas.

It's about reducing drudgery, like I alluded to here. Having something of a long tail in this industry now, I have seen the world when the product was hosted in the office on consumer hardware. I did the cowboy coding without version control, just FTP to the server. That's how debugging happened too sometimes. Then we got all full of ourselves: we needed more guardrails, we needed to support more engineers with lower skills, we needed to grow. That sounds like punching down or retroactive gatekeeping, but that's not the point. There wasn't time to train people on or off the job. Bootcamps did their best to teach functional skills and get the butts in the seats, but they were unable to embed what years of experience also provides. Being good, thoughtful people, we focused on how to make the work safer for more people and permit more capability with less experience. Then we overgeneralized: we produced specialization in managing tools and never built up the experience. It was abstracted away at a rate that required yet more abstraction. So now what we have is a ton of drudgery that hides its meaning so well it has become hard to use.

Regardless, we pooh-pooh shell scripts because they are brittle (citation needed). I have worked too many places where I was refused a merge due to the presence of a shell script, because I could have written the same thing in Ruby or JavaScript: a language that the humans understood better and that purportedly would be less brittle. Of course the brittleness is in the error handling, not the language. So this is where I started thinking about LLMs as error traps for code. Don't write a skill that does the work; have the LLM write the code and then a skill to run the code. When the code breaks because of a bad filename or a missing system dependency, it doesn't blow up. Instead the model takes over and either mitigates the flaw and follows the "spirit" of the code to get things done, or just fixes the code.
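The "error trap" idea can be sketched roughly like this. It is a minimal illustration, not a real skill: `run_with_fallback` and `describe_failure` are hypothetical names, and `describe_failure` only stands in for wherever the model would be handed the failure.

```python
import subprocess
from typing import Callable, Sequence


def run_with_fallback(
    command: Sequence[str],
    on_failure: Callable[[Sequence[str], int, str], str],
) -> str:
    """Run a command; on failure, hand the details to a fallback instead of raising."""
    try:
        result = subprocess.run(command, capture_output=True, text=True)
    except FileNotFoundError as exc:
        # Missing system dependency: the executable itself was not found.
        # 127 mirrors the shell convention for "command not found".
        return on_failure(command, 127, str(exc))
    if result.returncode == 0:
        return result.stdout
    # The script broke (bad filename, bad input, ...): pass along what ran and why.
    return on_failure(command, result.returncode, result.stderr)


def describe_failure(command: Sequence[str], returncode: int, stderr: str) -> str:
    """Hypothetical stand-in for the model: a real setup would send this context
    to an LLM and let it mitigate the flaw or fix the script."""
    return f"handoff: {' '.join(command)} exited {returncode}: {stderr.strip()}"
```

The point of the shape is that the script stays plain, deterministic code, and the "smart" part only wakes up on the failure path.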

Here is the thing: both of these are brittle solutions. One we expect to be brittle eventually; the other will be unexpectedly brittle but self-correcting. I know the cheap seats will say, "by unexpectedly you mean it will rm -rf ~/". I mean, maybe, sure, but that's pretty unlikely, especially if you don't let it run rm -rf outside of $CWD, or just not at all.
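One way to express the "not outside of $CWD" rule is a path check that runs before any delete is allowed. This is a sketch under assumptions: `deletion_allowed` is a hypothetical helper, and how it would be wired into an agent's permission system is not shown.

```python
from pathlib import Path
from typing import Optional


def deletion_allowed(target: str, root: Optional[Path] = None) -> bool:
    """Return True only if target resolves to a path strictly below root.

    root defaults to the current working directory. The root itself, anything
    reached via "..", and absolute paths elsewhere are all rejected.
    """
    base = (root or Path.cwd()).resolve()
    candidate = Path(target).expanduser()
    if not candidate.is_absolute():
        candidate = base / candidate
    resolved = candidate.resolve()
    # Strictly below: the root must be an ancestor, never the target itself.
    return base in resolved.parents


# Example: a hypothetical project root
project = Path("/tmp/example-project")
print(deletion_allowed("build/cache", project))  # inside the root
print(deletion_allowed("../other", project))     # escapes via ".."
```

Resolving both paths before comparing is what stops `..` segments and symlinks from sneaking past a naive string prefix check.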

TL;DR: Here is the point. We are always afraid of something. When it was humans we were afraid of the humans; now it's robots, and we are elevating the humans to the robots. What if we all just agreed that both are likely to screw everything up, and took the chance to pivot? Excessive abstraction didn't fix the problem; it didn't make the work easier or faster. It did make it consistent, which is the thing to learn. Now we have robots, and our purpose is to make them operate consistently. Time to break some rules and climb out of the pit of drudgery.

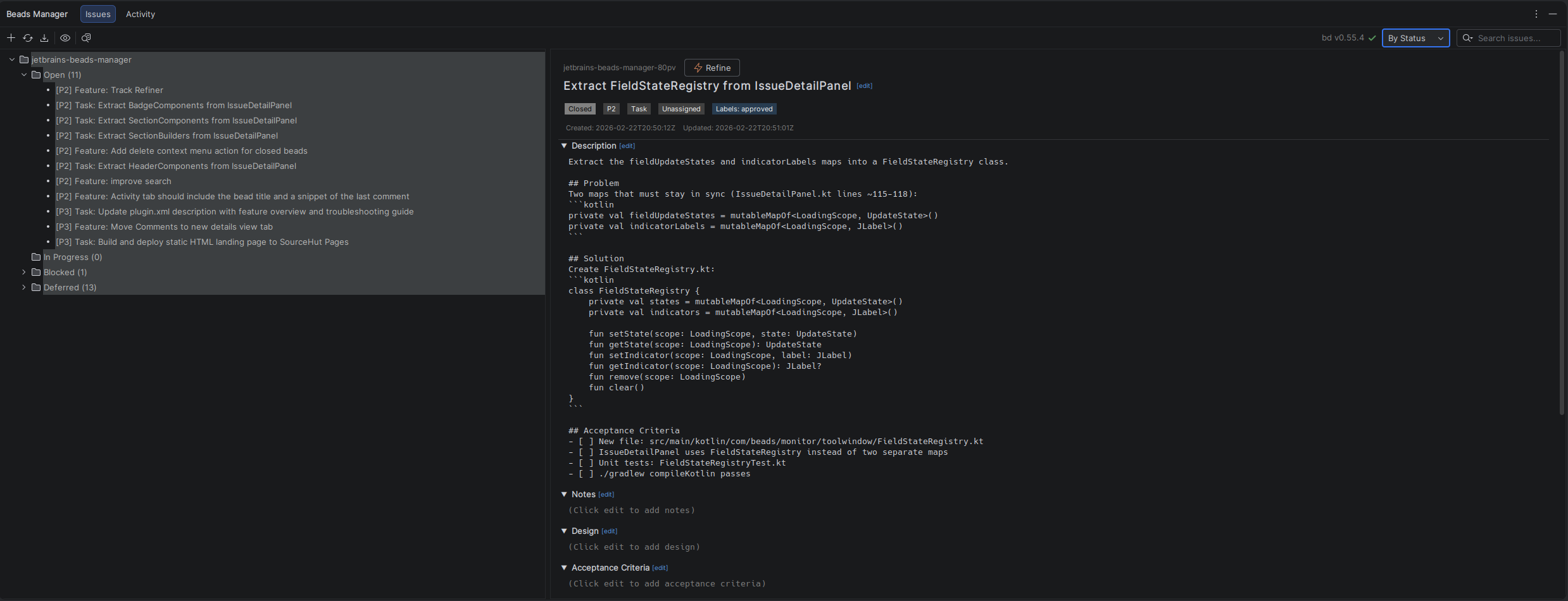

22 02 2026

Looking at the task, extracting 42+ fields and refactoring a 2300-line file in a single pass is ambitious. The agent likely ran into too many compilation errors and got stuck.

Let me mark this bead as blocked and continue with the other work. The user can decide how to proceed.

rwad failed again - no commits made. This is a complex refactoring (42+ fields in a 2300-line file). Marking as blocked.

For context, the file in question is com.beads.monitor.toolwindow.IssueDetailPanel. Over the course of about 20 days of development, this file has come to represent the main interface for the beads manager.

There is a lot going on here, but it is really rather mild compared to software I have worked on in the past. This file only represents the right-hand details panel, which in the background includes things like partial editing, reloading, and partial refreshes. But there is little I can accept as a reason for this file to be 2300 lines. What brought us here was the discovery that regressions were introduced with each new feature added. Many would take 5-6 iterations for Claude to solve. It became an enormous time suck.

The learning here is that there must be a pressure valve for refactors. I expect that we need systems that can observe file complexity once again. Like the days of old, it's probably necessary to hold coding agents to far stricter standards than the average developer. This wild growth is unsettling, and in my past, when working with younger devs, it was tools like flog and flay that helped guide code growth.

As a very senior developer now, these things seem natural. Complexity is a way of life, and as I always say, software engineering is change management.
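A crude version of that pressure valve could be a check that runs after every agent change and fails loudly once a file outgrows its budget. The sketch below is not flog or flay; it only counts non-blank lines and the deepest indentation level, and the thresholds (`max_lines=400`, `indent_width=4`) are made-up assumptions.

```python
from dataclasses import dataclass


@dataclass
class FileReport:
    lines: int         # non-blank lines
    max_indent: int    # deepest indentation level seen
    over_budget: bool  # True when the file exceeds the line budget


def assess_source(text: str, max_lines: int = 400, indent_width: int = 4) -> FileReport:
    """Measure two rough complexity proxies for a source file's text."""
    code_lines = [line for line in text.splitlines() if line.strip()]
    deepest = 0
    for line in code_lines:
        leading_spaces = len(line) - len(line.lstrip(" "))
        deepest = max(deepest, leading_spaces // indent_width)
    return FileReport(
        lines=len(code_lines),
        max_indent=deepest,
        over_budget=len(code_lines) > max_lines,
    )


# Example: a 2300-line file blows well past a 400-line budget
report = assess_source("val x = 1\n" * 2300)
print(report.lines, report.over_budget)
```

Wired into a pre-commit hook or an agent's completion check, a report like this gives the agent a concrete reason to stop and refactor before the next feature lands.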

08 02 2026

I've been tracking beads data on the JetBrains Beads Manager plugin build and the numbers tell the 80/20 story pretty clearly.

Four days, 156 issues closed. Sounds impressive until you look at the breakdown:

| Date | Features/Epics | Tasks | Bugs | Total |
| --- | --- | --- | --- | --- |
| Feb 5 | 5 | 24 | 29 | 59 |
| Feb 6 | 12 | 9 | 12 | 37 |
| Feb 7 | 4 | 34 | 12 | 50 |
| Feb 8 | 1 | 5 | 3 | 10 |
| Total | 22 (14%) | 72 (46%) | 56 (36%) | 156 |

Day 1 built the thing. 5 features, 24 tasks to wire them up, and immediately 29 bugs. Day 2 added more features - macOS compatibility, settings panels, refresh timers. Day 3 and 4? Chasing bugs and polish. UI stuttering, race conditions, tree selection quirks, scroll position resets.

56 bugs out of 156 total issues. That's 36% of all tickets just fixing what the agents broke while building features. And those bug tickets often took longer - VFS async race conditions, deprecated API replacements, multi-selection state management. The kind of stuff where the agent confidently implements the wrong fix and you're three attempts deep before finding the real problem.

The agents built a working plugin in a day. Then we spent three days making it actually work.
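The percentages in the breakdown can be checked with a few lines of arithmetic. This sketch uses only the totals reported above; the variable names are mine. The three listed categories add up to 150, so the remaining 6 of the 156 closed issues are presumably of types the table doesn't break out (an assumption, the numbers don't say).

```python
# Totals as reported in the breakdown
closed_by_type = {"features_epics": 22, "tasks": 72, "bugs": 56}
total_closed = 156

# Share of all closed issues, rounded to whole percent
shares = {
    kind: round(100 * count / total_closed)
    for kind, count in closed_by_type.items()
}

# Issues not covered by the three listed categories
unlisted = total_closed - sum(closed_by_type.values())

print(shares)
print(unlisted)
```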

03 02 2026

This is just a thought process I go through with LLM generated code...

OK, I can produce more code than I reasonably can keep track of in a single session, which means there is always going to be some code I didn't read.

OK, I can always produce and keep in sync documentation about the code that is produced, ADRs and design docs. But if they are too long no one will read them. But at least there is some consumable record.

Kinda like a factory maybe stamping widgets, because this model of writing all the code all the time seems a little odd. I should be writing less code and there should be more shared code. If the product is the feature and the speed to market is what matters then the cost for encapsulation should go down. Modern products will end up as composable licensable modules.

This is kind of the path that infrastructure took, so why not product. Think about it, if we can remove the human ego from deciding on a solution then any solution is good as long as it can be wired into the product.

If code gen is expensive it's better to reduce the work and just contribute to open source.

I might have lost you there but hear me out: Just Forget About Owning Code

02 02 2026

On Sunday I spent some more considerable time building something dumb and noticed something interesting. I had observed this before, but this was formal confirmation because I was able to encounter the same issue across multiple models. I don't know what the common source for coding training data is, but as a person who makes programs that do specific non-business tasks, it seems clear that all the models I have worked with so far don't know how to make a browser extension. Add it to the list of things like WebRTC, but in this case understanding Manifest V2 vs V3 is always a challenge. In most cases my usage for LLMs is to help get me past the hump of a new technology; traditionally, if I know a technology I write the code myself. I have built a number of extensions with various LLMs and they always get trapped on CSP and manifest considerations. They also don't seem to understand anything about how the browser works outside the spec. An extension has to follow a bunch of rules that are bespoke to the application, but these are unknown to the models' training, it would seem.
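The V2 vs V3 confusion tends to show up as V2-era keys left in a manifest that claims to be V3. A small checker like the following can catch the common ones before the browser does. It is a sketch covering only a handful of differences from Chrome's Manifest V3 migration notes, and `find_mv2_leftovers` is a hypothetical helper, not part of any extension tooling.

```python
from typing import List


def find_mv2_leftovers(manifest: dict) -> List[str]:
    """List Manifest V2 patterns that Chrome's Manifest V3 no longer accepts."""
    problems = []
    if manifest.get("manifest_version") != 3:
        problems.append('"manifest_version" should be 3')
    for key in ("browser_action", "page_action"):
        if key in manifest:
            problems.append(f'"{key}" is replaced by "action"')
    background = manifest.get("background", {})
    if "scripts" in background or "page" in background:
        problems.append('background scripts/pages are replaced by "service_worker"')
    if isinstance(manifest.get("content_security_policy"), str):
        problems.append('"content_security_policy" is an object keyed by "extension_pages"')
    permissions = manifest.get("permissions", [])
    if any("://" in p or p == "<all_urls>" for p in permissions):
        problems.append('host patterns move from "permissions" to "host_permissions"')
    return problems


# Example: a V2-style manifest, as a model might generate it
legacy = {
    "manifest_version": 2,
    "browser_action": {"default_popup": "popup.html"},
    "background": {"scripts": ["background.js"]},
    "content_security_policy": "script-src 'self'; object-src 'self'",
    "permissions": ["tabs", "https://example.com/*"],
}
for problem in find_mv2_leftovers(legacy):
    print(problem)
```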

But $10 to build an HLS extractor is pretty cool.

30 01 2026

Success with coding agents is, as expected, completely bound to the quality of the model used. So much of how the agent works depends on the model architecture that very little configuration work built for Claude will, say, work with Qwen. But outside of foundation models, tool use is quite limited on commodity hardware. Having taken a stab this weekend across a number of different models, I can confirm that models focused on a task perform better than generalized foundation models.

A great example of this is a comparison of MiniMax and Qwen2.5 Coder vs Claude Code. The tools are so similar that it really highlighted the differences between the models. One of the things that Claude Code has going for it is the user experience; it's quite tight. But it also leads to some Apple-like resistance. On the other hand, OpenCode as a tool did all the same things, sans agent generation skills, but being able to switch between models was critical. I would use MiniMax2.5 for coding in one terminal and then Qwen or something smaller on a local machine. It was totally reasonable to have a cloud model doing the heavy lifting and a local model doing code reviews or writing comments.

28 01 2026

I gotta admit there is one thing about using AI coding tools that continues to be true: no matter how much I try to constrain the model's failures, I generally get similar results. Unless I know exactly what I want it to do and can provide a complex enough context, the results will be that of an "Eager Intern": I will get results that I didn't expect, and in the obvious places where the model should have stopped and asked questions, it didn't. I suspect that the model was trained to focus more on task completion than task accuracy. I have a few times been able to get various agents to "give up" and tell me to try again. Of those, Junie definitely does this and doesn't waste my time. Claude Code, though, is too appeasing: it closes tasks without verification even when prompted to verify its work. Even with orchestration of multiple agents with fresh contexts, asking to build an app that isn't a todo-list will fail. This benefits the sale of coding tools: during evaluation it impresses with the ability to construct simple things, but it falls over when complex solutions are required. When I say complex, I mean those that are generally novel or require interactions over APIs. It commonly produces boilerplate, which I think is by design to influence the LoC numbers for code generation stats. But insidiously, it is also there to obscure the solution it introduces.

A clear sign of AI code generation is bloat and intentional omissions. As of yet, the only way I have found to avoid these omissions is to have the model show its work and put it in clear view of me. So I can set it on a task and watch its completion, then ask it to review the goals and try again. This clearly sucks. I can introduce tools to guide it away from the problem, but that's just a bad tool, not something that is going to change the nature of my job. It is, on the other hand, an insult to my 20-year career and to all the juniors who are unable to get a job because there is an assumption that if we just "trust me bro" enough it will work.

27 01 2026

If you work for a company that laid off all your juniors in the past year, it is unbelievably poor taste to continue posting about the merits of AI and vibe coding on a platform where the majority of folks are currently looking for full-time work and do not want to be beaten to death with constant AI thinkpieces. Where did human-centered go in 2026? Because all I've seen so far from C-suite leaders and middle managers is forgetting how they got to where they are now. - Jen Udan - REF

I have been thinking about it like this... Consider some big enterprise that makes this commitment. They have to get some financial approval for the act and may have committed to some outputs. Now let's say that AI is golf clubs, and we just gave everyone a real nice set followed by "be good at golf by the end of the month." All this hype is just from people who own sporting goods stores. The latest debacle, about Cursor creating a browser without a human in the loop when it didn't compile and humans were still in the loop, can still land in the post-truth world we live in. If my job was being told things are being accomplished, and I got access to a todo-list that tells me my tasks are done, it's gunna be real hard to not be attracted to such things.

I get to see the outputs of the C-Suite from time to time. The model tries to do the engineering work for me and guided by a visitor it often misses where the rules matter and where the rules can be bent.

It's this ^ a very enticing concept. What of course is missed is I have to keep watching the bots work and stop them from looping. I guarantee it will get better but if the need for progress is all we care about maybe we should be thinking back to something simpler. People of Process, if we need to get things done we need to cut the red tape not unroll all the red tape into a ball and then wonder why we can't find anything.

20 01 2026

This one is more just about the fun of working with other engineers and AI. While I will not post the code, I was impacted by the size of the rebase it caused and the need for me to rewrite my feature. The code the model wrote only cared about things working. It built 200-line blocks of deeply nested conditional logic into existing functions, adding catch clauses for exceptions that mean another service has failed and should not be caught. The telling part is that when we reviewed the code with the developer, he was unable to explain why these things existed. It's a noob mistake, but it's one that AI tends to promote. The endless "Trust Me Bro," and instead this wasted 6 hours of developer time and 3 days in a feature rewrite.

I know there is a mentality that encapsulation adds to cognitive overhead in humans, but it exists because the overhead of 5 levels of if statements is higher. But what happens when the code is reviewed by the same model that produced it? The code seems to make sense, and without the context of the architecture (aka we just focused the changes on a single file) we end up with some real new debt.
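Since the real code stays private, here is an invented illustration of the catch-clause problem. Every name in it (`UpstreamServiceError`, `price_swallowing`, `price_propagating`) is hypothetical. The first function catches everything so that "things work"; the second handles only the condition it actually owns and lets a failed dependency surface.

```python
from typing import Callable, Optional


class UpstreamServiceError(Exception):
    """Hypothetical error meaning another service has failed."""


def price_swallowing(sku: str, lookup: Callable[[str], float]) -> float:
    """The pattern to avoid: a blanket catch that hides an outage."""
    try:
        return lookup(sku)
    except Exception:
        # An upstream outage now looks like a valid price of zero.
        return 0.0


def price_propagating(sku: str, lookup: Callable[[str], float]) -> Optional[float]:
    """Handle only the local, expected case; let service failures bubble up."""
    try:
        return lookup(sku)
    except KeyError:
        # An unknown SKU is something this function can reasonably own.
        return None
    # UpstreamServiceError is deliberately not caught here: the caller, or the
    # platform's error handling, needs to see that a dependency is down.
```

With the second version, an outage shows up as an outage in logs and alerts, rather than as silently wrong data several layers away.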